Autonomous vehicles don’t get the luxury of waiting.

At highway speed, even a fraction of a second of delay can turn a routine situation into something far more serious. A pedestrian stepping off a curb. A car braking suddenly ahead. A lane marking fading in heavy rain. These moments leave no room for hesitation.

This is where edge computing in autonomous vehicles becomes essential.

Instead of sending data off to distant servers and waiting for a response, vehicles process information right where it’s created—inside the car. That shift changes everything. Decisions happen instantly, systems stay functional without connectivity, and the vehicle remains responsive even in unpredictable environments.

The idea sounds straightforward. In practice, it’s a carefully balanced system of hardware, software, and constant trade-offs.

What Is Edge Computing in Autonomous Vehicles?

At its simplest, edge computing in autonomous vehicles means handling data locally—inside the vehicle—rather than depending entirely on the cloud.

Modern vehicles are surrounded by sensors. Cameras watch the road. LiDAR maps depth. Radar tracks motion. All of this generates a constant stream of data. Sending it all elsewhere isn’t just inefficient—it’s too slow.

So the vehicle handles it itself.

In short: decisions are made where the data is generated.

That allows the system to:

- Recognize obstacles immediately

- Adjust steering or speed without delay

- Keep operating even when the network drops

It’s closely tied to edge AI, where trained models run directly on the vehicle’s hardware, interpreting the environment in real time.

How Edge Computing in Autonomous Vehicles Works

From the outside, it feels seamless. Underneath, it’s a layered process happening continuously.

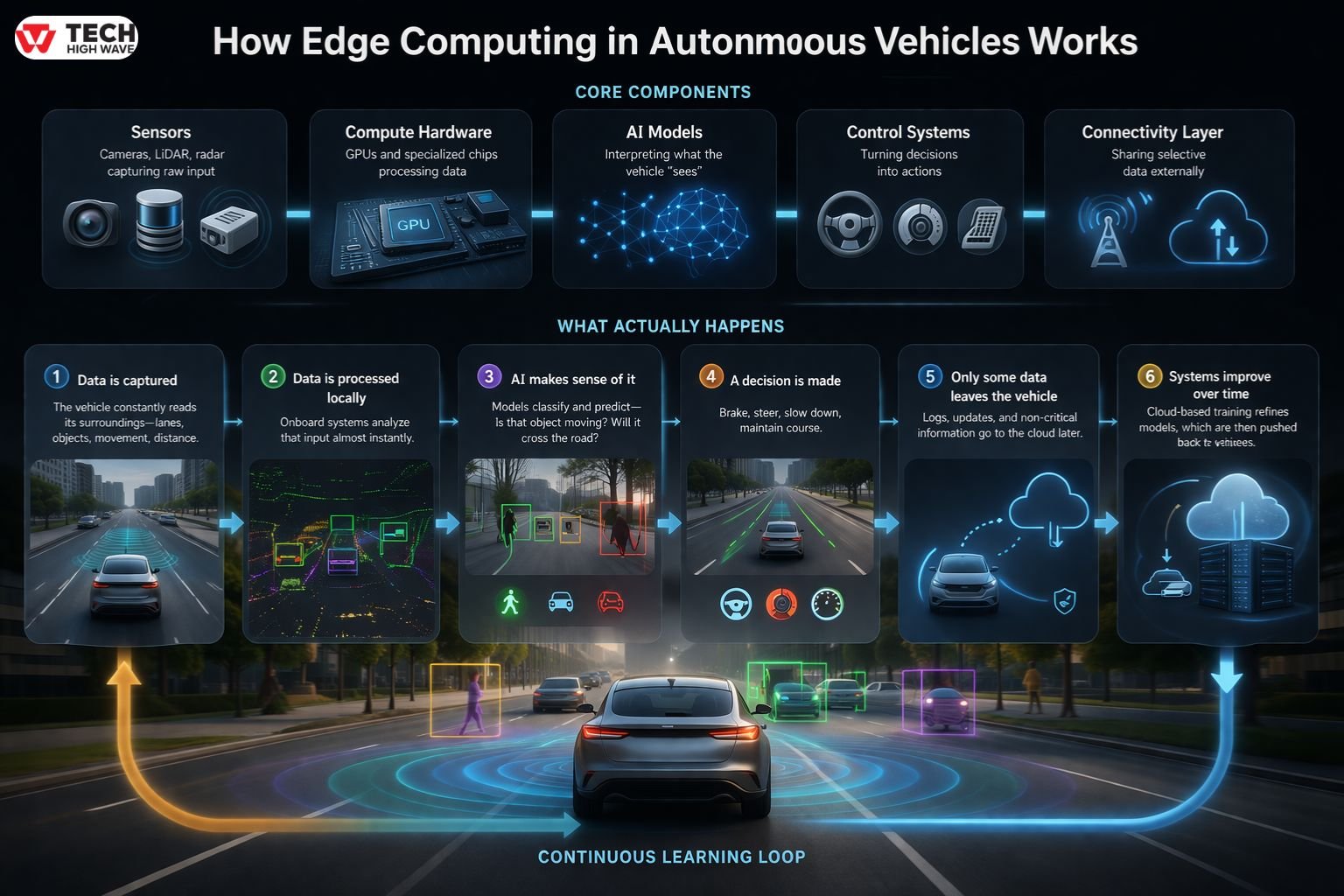

Core Components

- Sensors: Cameras, LiDAR, radar capturing raw input

- Compute hardware: GPUs and specialized chips processing data

- AI models: Interpreting what the vehicle “sees”

- Control systems: Turning decisions into actions

- Connectivity layer: Sharing selective data externally

What Actually Happens

- Data is captured: The vehicle constantly reads its surroundings, including lanes, objects, movement, and distance.

- Data is processed locally: Onboard systems analyze that input almost instantly, identifying vehicles, pedestrians, and road features.

- AI makes sense of it: Models classify and predict. Is that object moving? Will it cross the road?

- A decision is made: Brake, steer, slow down, or maintain course.

- Only some data leaves the vehicle: Logs, updates, and non-critical information go to the cloud later.

- Systems improve over time: Cloud-based training refines models, which are then pushed back to vehicles.

It sounds linear. In reality, all of this overlaps and repeats constantly.
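One pass through that cycle can be sketched in a few lines of Python. This is a minimal sketch under loud assumptions: `Frame`, `perceive`, `decide`, the 30 m braking rule, and the upload queue are hypothetical placeholders, not any manufacturer’s software. What it shows is the structure: every decision is made on board, while log records wait in a local queue and only leave the vehicle when a connection happens to be available.

```python
from collections import deque
from dataclasses import dataclass


@dataclass
class Frame:
    """Hypothetical, heavily simplified sensor reading."""
    timestamp_s: float
    obstacle_distance_m: float  # distance to the nearest obstacle ahead


def perceive(frame: Frame) -> dict:
    # Placeholder for on-board perception and AI inference.
    return {"obstacle_ahead": frame.obstacle_distance_m < 30.0}


def decide(scene: dict) -> str:
    # Placeholder planning rule: brake if something is close, otherwise hold course.
    return "brake" if scene["obstacle_ahead"] else "maintain"


def run_cycle(frame: Frame, upload_queue: deque, connected: bool, uploaded: list) -> str:
    scene = perceive(frame)   # processed locally
    action = decide(scene)    # decided locally, with no network round trip
    upload_queue.append({"t": frame.timestamp_s, "action": action})  # log for later
    if connected:             # non-critical data leaves only when a link is available
        while upload_queue:
            uploaded.append(upload_queue.popleft())
    return action


pending, cloud = deque(), []
print(run_cycle(Frame(0.00, 80.0), pending, connected=False, uploaded=cloud))  # maintain
print(run_cycle(Frame(0.05, 20.0), pending, connected=False, uploaded=cloud))  # brake
print(len(pending), len(cloud))                                                # 2 0
run_cycle(Frame(0.10, 18.0), pending, connected=True, uploaded=cloud)
print(len(pending), len(cloud))                                                # 0 3
```

The braking decision on the second cycle is made while the vehicle is offline; only the logging is deferred.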

Edge AI Inference in Autonomous Vehicles

A useful way to understand this is to separate two things: training and inference.

Training happens elsewhere. Inference happens inside the car.

Inference is the moment a system turns input into action.

For example:

- A camera detects movement

- The model identifies a pedestrian

- The system predicts trajectory

- The vehicle responds

All of that happens locally.

There’s no time to ask a remote server what to do.
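The four steps in that example can be illustrated with a toy prediction step. This sketch assumes the detector has already produced a labelled track with position and velocity relative to the vehicle (x ahead, y to the left, in metres), and it uses a constant-velocity model, one of the simplest trajectory predictors, purely for illustration. The `Track` type, the 1.8 m lane half-width, and the 2-second horizon are hypothetical; production systems use far richer prediction models.

```python
from dataclasses import dataclass


@dataclass
class Track:
    """Hypothetical detector output for one object, in the vehicle's frame."""
    label: str
    x_m: float      # distance ahead of the vehicle
    y_m: float      # lateral offset from lane centre (positive = left)
    vx_mps: float   # velocity relative to the vehicle
    vy_mps: float


def predict_positions(track: Track, horizon_s: float = 2.0, step_s: float = 0.1):
    """Constant-velocity prediction: p(t) = p0 + v * t, sampled every step_s."""
    steps = int(round(horizon_s / step_s))
    return [(track.x_m + track.vx_mps * k * step_s,
             track.y_m + track.vy_mps * k * step_s)
            for k in range(steps + 1)]


def will_enter_lane(track: Track, lane_half_width_m: float = 1.8,
                    horizon_s: float = 2.0) -> bool:
    # True if any predicted point is ahead of the vehicle and inside its lane corridor.
    return any(x > 0 and abs(y) <= lane_half_width_m
               for x, y in predict_positions(track, horizon_s))


def respond(track: Track) -> str:
    if track.label == "pedestrian" and will_enter_lane(track):
        return "brake"
    return "maintain"


# Pedestrian 25 m ahead, 4 m to the left, walking toward the lane at 1.5 m/s.
walker = Track("pedestrian", x_m=25.0, y_m=4.0, vx_mps=0.0, vy_mps=-1.5)
print(respond(walker))  # brake: predicted to step into the lane within 2 s
```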

That said, running models inside a vehicle isn’t trivial. Hardware has limits—power, heat, space. Models have to be efficient, not just accurate. There’s always a balance between performance and practicality.
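One common way to make a model cheaper to run on vehicle hardware is post-training quantization: storing weights as 8-bit integers instead of 32-bit floats, which cuts weight memory by a factor of four at the cost of a small rounding error. The sketch below shows symmetric per-tensor int8 quantization using only the Python standard library; the random “weights” are stand-in data, and this illustrates the arithmetic rather than the toolchain any particular vehicle platform uses.

```python
import random
from array import array


def quantize_int8(weights):
    """Symmetric per-tensor quantization: w ~= scale * q, with q in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    q = array("b", (max(-127, min(127, round(w / scale))) for w in weights))
    return q, scale


def dequantize(q, scale):
    return [v * scale for v in q]


random.seed(0)
weights = array("f", (random.gauss(0.0, 1.0) for _ in range(4096)))  # stand-in float32 weights
q, scale = quantize_int8(weights)
max_err = max(abs(a - b) for a, b in zip(dequantize(q, scale), weights))

print(len(weights) * weights.itemsize, "bytes as float32")  # 16384 bytes as float32
print(len(q) * q.itemsize, "bytes as int8")                  # 4096 bytes as int8
print(max_err <= scale / 2 + 1e-9)                           # True: error is at most half a step
```

The design choice here is the usual trade-off the paragraph describes: a quarter of the memory and cheaper integer arithmetic, in exchange for every weight being off by up to half a quantization step.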

Key Benefits of Edge Computing in Autonomous Vehicles

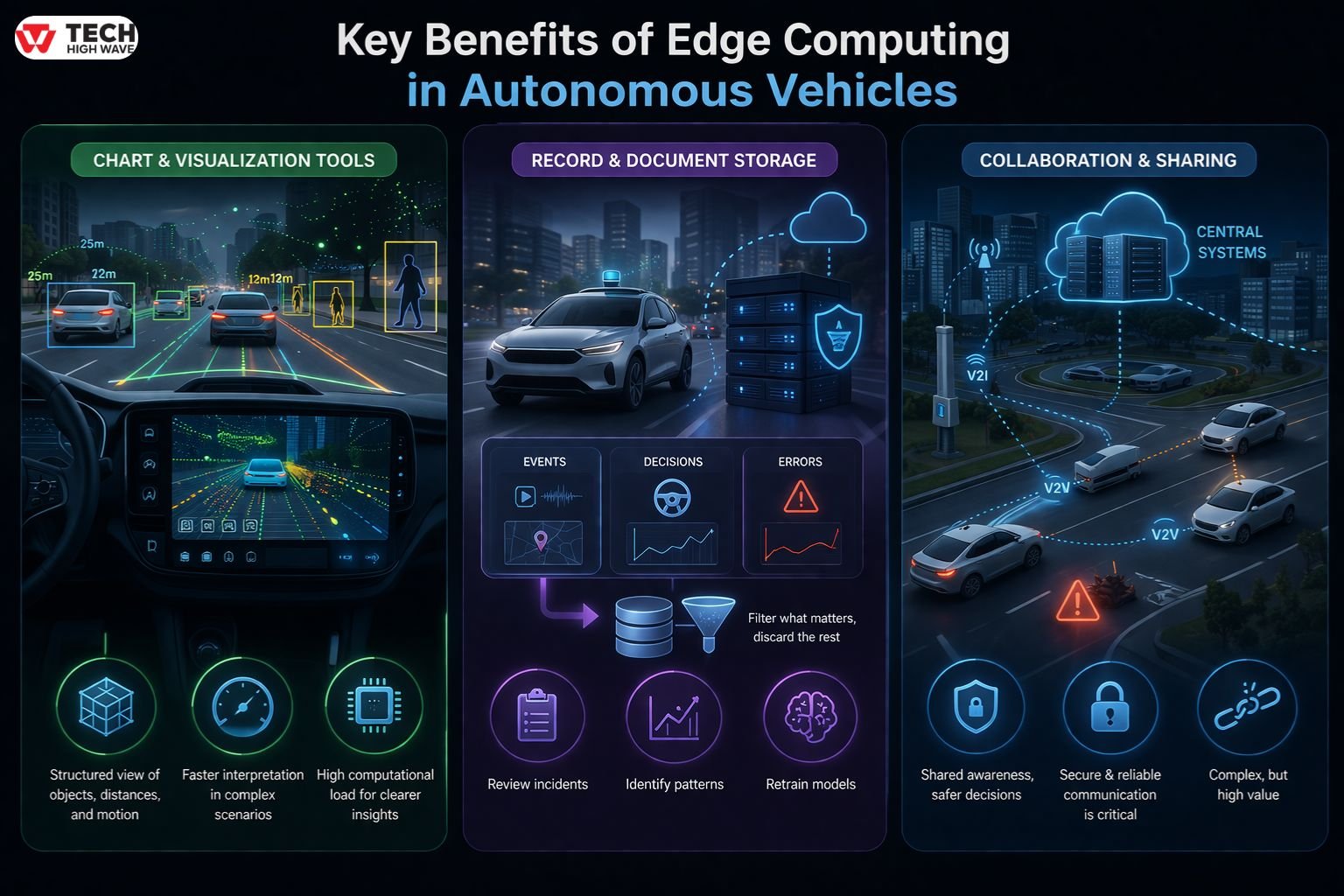

Structured Scene Representation

Raw sensor data isn’t useful on its own. It has to be structured.

Vehicles build a kind of internal map—objects, distances, motion paths. Not a visual map for humans, but something the system can act on.

In heavy traffic or low visibility, this structured view matters. It helps the vehicle interpret complex scenes quickly.

The trade-off is computational load. The clearer the “picture,” the more processing it requires.
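One widely used form of that internal map is an occupancy grid: the area around the vehicle is divided into cells, and each cell records whether something occupies it. The sketch below assumes detections arrive as (x, y) points in metres relative to the vehicle; the 40 m by 40 m extent and the cell sizes are illustrative values, not figures from any real system. It also makes the computational trade-off concrete: halving the cell size quadruples the number of cells to maintain.

```python
def make_grid(extent_m: float, cell_m: float):
    """Square grid centred on the vehicle; 0 = free/unknown, 1 = occupied."""
    n = int(extent_m / cell_m)
    return [[0] * n for _ in range(n)]


def mark_occupied(grid, points, extent_m: float, cell_m: float):
    """Mark every cell that contains a detected point (x, y) in metres."""
    n = len(grid)
    half = extent_m / 2
    for x, y in points:
        col = int((x + half) // cell_m)
        row = int((y + half) // cell_m)
        if 0 <= row < n and 0 <= col < n:  # ignore points outside the mapped area
            grid[row][col] = 1
    return grid


detections = [(10.0, -2.0), (25.0, 0.0)]  # second point lies outside a 40 m x 40 m map
grid = mark_occupied(make_grid(40.0, 0.5), detections, 40.0, 0.5)
print(sum(map(sum, grid)))  # 1

for cell_m in (0.5, 0.25):
    n = len(make_grid(40.0, cell_m))
    print(f"{cell_m} m cells: {n * n} cells")  # 6400, then 25600
```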

Event Logging & Data Storage

Autonomous systems log everything—events, errors, decisions.

This isn’t just for debugging. It’s how systems improve.

- Incidents can be reviewed

- Patterns can be identified

- Models can be retrained

Keeping every raw sensor frame would be overwhelming, so the system filters what matters and discards the rest.
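A common way to filter what matters is an event-triggered buffer: the vehicle keeps only the most recent samples in a fixed-size ring buffer and saves that window only when something notable happens, such as hard braking. The sketch below is hypothetical; the `EventRecorder` class, the window size, the 6 m/s² deceleration trigger, and the sample format are assumptions chosen for illustration.

```python
from collections import deque


class EventRecorder:
    """Keep a rolling window of samples; persist it only when an event fires."""

    def __init__(self, window: int, decel_trigger_mps2: float = 6.0):
        self.buffer = deque(maxlen=window)  # oldest samples fall off automatically
        self.decel_trigger = decel_trigger_mps2
        self.saved_events = []              # stands in for persistent storage

    def record(self, sample: dict) -> None:
        self.buffer.append(sample)
        if sample["decel_mps2"] >= self.decel_trigger:
            # Snapshot the history leading up to (and including) the event.
            self.saved_events.append(list(self.buffer))


rec = EventRecorder(window=5)
for i in range(20):
    rec.record({"i": i, "decel_mps2": 8.0 if i == 12 else 0.5})  # hard braking at sample 12

print(len(rec.saved_events))                  # 1
print([s["i"] for s in rec.saved_events[0]])  # [8, 9, 10, 11, 12]
```

Twenty samples came in, but only the five surrounding the braking event were kept for later review and retraining.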

Collaboration & Sharing

Vehicles don’t operate in isolation anymore.

They can exchange information:

- With other vehicles (V2V)

- With infrastructure (V2I)

- With centralized systems

This allows for shared awareness. A hazard detected by one vehicle can influence another.

But it introduces complexity too. Data has to be secure. Communication has to be reliable. Otherwise, the benefits disappear quickly.
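To make “secure” and “reliable” concrete, the sketch below attaches an authentication tag and a timestamp to a hazard message, and the receiver rejects anything that has been altered or is too old to act on. It is a deliberate simplification: deployed V2X security (for example IEEE 1609.2) relies on certificate-based digital signatures rather than a shared secret key, and the message fields, key, and 0.5-second freshness window here are hypothetical.

```python
import hashlib
import hmac
import json

SHARED_KEY = b"demo-key-not-for-production"  # hypothetical; real V2X uses PKI certificates
MAX_AGE_S = 0.5                              # hypothetical freshness window


def _digest(body: dict, key: bytes) -> str:
    payload = json.dumps(body, sort_keys=True).encode()
    return hmac.new(key, payload, hashlib.sha256).hexdigest()


def sign(body: dict, key: bytes = SHARED_KEY) -> dict:
    return {"body": body, "tag": _digest(body, key)}


def verify(packet: dict, now_s: float, key: bytes = SHARED_KEY) -> bool:
    if not hmac.compare_digest(_digest(packet["body"], key), packet["tag"]):
        return False  # altered in transit or signed with a different key
    age_s = now_s - packet["body"]["sent_at_s"]
    return 0.0 <= age_s <= MAX_AGE_S  # reject stale or future-dated messages


pkt = sign({"type": "hazard", "lat": 52.52, "lon": 13.405, "sent_at_s": 100.0})
print(verify(pkt, now_s=100.2))     # True
print(verify(pkt, now_s=101.0))     # False: too old
forged = {"body": {**pkt["body"], "type": "clear"}, "tag": pkt["tag"]}
print(verify(forged, now_s=100.2))  # False: tag no longer matches
```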

Best Uses for Edge Computing in Autonomous Vehicles

Some situations make the need for edge computing obvious.

Obstacle detection

At speed, there’s no buffer. Recognition and reaction must be immediate.
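A quick calculation shows why. Distance covered during a delay is speed multiplied by time, so at 100 km/h (about 27.8 m/s) the vehicle travels roughly 2.8 m for every 100 ms that passes before it can react. The delays below are illustrative values, not measurements of any particular network or onboard system.

```python
def distance_during_delay_m(speed_kmh: float, delay_ms: float) -> float:
    """Metres travelled while waiting delay_ms at a constant speed."""
    speed_mps = speed_kmh / 3.6  # km/h -> m/s
    return speed_mps * (delay_ms / 1000.0)


for delay_ms in (10, 50, 100, 200):
    d = distance_during_delay_m(100, delay_ms)
    print(f"{delay_ms:>4} ms at 100 km/h -> {d:.2f} m")  # 0.28, 1.39, 2.78, 5.56 m
```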

Navigation adjustments

Road conditions change constantly. Lane shifts, merging traffic, unexpected obstacles.

Driver assistance systems

Even partial automation—like emergency braking—depends on fast local processing.
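Emergency braking logic is often explained in terms of time-to-collision (TTC): the gap to the vehicle ahead divided by the speed at which that gap is closing. The sketch below applies that definition with hypothetical warning and braking thresholds (2.5 s and 1.5 s); the function names and thresholds are assumptions, and real systems calibrate such values carefully and combine them with many other signals.

```python
import math


def time_to_collision_s(gap_m: float, ego_speed_mps: float, lead_speed_mps: float) -> float:
    """Gap divided by closing speed; infinite if the gap is not shrinking."""
    closing_mps = ego_speed_mps - lead_speed_mps
    return gap_m / closing_mps if closing_mps > 0 else math.inf


def aeb_action(gap_m: float, ego_speed_mps: float, lead_speed_mps: float,
               warn_s: float = 2.5, brake_s: float = 1.5) -> str:
    ttc = time_to_collision_s(gap_m, ego_speed_mps, lead_speed_mps)
    if ttc <= brake_s:
        return "brake"
    if ttc <= warn_s:
        return "warn"
    return "none"


# Ego at 25 m/s (90 km/h) approaching a car doing 5 m/s: closing speed 20 m/s.
for gap in (80.0, 40.0, 20.0):
    print(gap, aeb_action(gap, 25.0, 5.0))  # none (4.0 s), warn (2.0 s), brake (1.0 s)
```

Because the whole check is a division and two comparisons on locally available data, it can run every sensor cycle without touching the network.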

Fleet operations

Vehicles can monitor themselves, flag issues early, and reduce downtime.

Urban environments

Interacting with traffic systems, signals, and congestion patterns in real time.

A Simple Scenario

A pedestrian steps into the road.

The vehicle detects motion, identifies a human, predicts movement, and applies the brakes.

No signal is sent. No external confirmation is needed.

It just happens.

What Is Edge Computing? Core Concepts Explained

Edge computing is simply about proximity—processing data closer to where it’s generated.

That proximity changes performance.

Where It Helps Most

- Systems that need immediate responses

- Environments with unstable connectivity

- Applications generating large data volumes

Where It Matters Less

- Non-time-sensitive workloads

- Systems with stable, high-speed connectivity

- Low-data environments

It’s not a universal solution. It’s a situational one.

Edge Computing vs Cloud vs Fog Computing

| Model | Processing Location | Latency | Typical Use Case |
| --- | --- | --- | --- |
| Cloud Computing | Centralized data centers | Higher | Big data processing, storage, AI training |
| Fog Computing | Regional / intermediary nodes | Medium | Traffic coordination, data aggregation |
| Edge Computing | On-device or near data source | Ultra-low | Real-time control, AV perception |

These models aren’t competing. They work together.

Edge handles immediacy. Cloud handles scale. Fog sits somewhere in between.
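That division of labour can be expressed as a simple placement rule: run a task on the most centralized tier whose round trip still fits the task’s deadline, and keep anything safety-critical on the vehicle regardless. The `place_task` function and the round-trip figures are hypothetical illustrations, not benchmarks, and real placement decisions also weigh bandwidth, cost, and privacy.

```python
# Assumed round-trip times in milliseconds; illustrative only, not benchmarks.
TIER_RTT_MS = {"cloud": 100.0, "fog": 20.0, "edge": 0.0}


def place_task(deadline_ms: float, safety_critical: bool) -> str:
    """Pick the most centralized tier whose round trip still meets the deadline."""
    if safety_critical:
        return "edge"  # safety functions never wait on the network
    for tier in ("cloud", "fog", "edge"):  # prefer scale, fall back toward the vehicle
        if TIER_RTT_MS[tier] <= deadline_ms:
            return tier
    return "edge"


print(place_task(deadline_ms=50, safety_critical=True))          # edge  (e.g. braking)
print(place_task(deadline_ms=50, safety_critical=False))         # fog   (e.g. traffic coordination)
print(place_task(deadline_ms=3_600_000, safety_critical=False))  # cloud (e.g. model training)
```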

Why Autonomous Vehicles Require Edge Computing

There are a few realities that make edge computing unavoidable.

Timing

Decisions can’t wait.

Connectivity

Networks fail. Vehicles still have to operate.

Data volume

There’s simply too much data to send elsewhere continuously.
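Some rough arithmetic shows the scale. Take a hypothetical suite of eight cameras producing uncompressed 1920 x 1080 frames at 3 bytes per pixel and 30 frames per second; the camera count and formats are assumptions, and real vehicles compress, crop, and downsample heavily. The cameras alone would generate roughly 1.5 GB of raw data every second, before LiDAR and radar are counted.

```python
def raw_camera_rate_bytes_per_s(width: int, height: int, bytes_per_px: int,
                                fps: int, cameras: int) -> int:
    """Uncompressed data rate for a set of identical cameras."""
    return width * height * bytes_per_px * fps * cameras


rate = raw_camera_rate_bytes_per_s(1920, 1080, 3, 30, 8)
print(f"{rate / 1e9:.2f} GB/s")             # 1.49 GB/s
print(f"{rate * 8 / 1e9:.1f} Gbit/s")       # 11.9 Gbit/s
print(f"{rate * 3600 / 1e12:.1f} TB/hour")  # 5.4 TB/hour
```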

Reliability

Critical systems can’t depend on external infrastructure.

Where Things Get Complicated

- Hardware can become a bottleneck

- Systems generate heat under load

- Security risks increase at the edge

- Scaling across fleets adds complexity

No system is perfect. Edge computing solves one set of problems while introducing another.

Edge Computing Architecture in Autonomous Vehicles

Most systems follow a layered structure:

- Perception: Understanding the environment

- Planning: Deciding what to do next

- Control: Executing the action

Supporting all of this is the compute layer—specialized hardware designed to process large amounts of data quickly without excessive power consumption.

Balancing performance with thermal limits remains one of the hardest challenges.

Challenges of Edge Computing in Autonomous Vehicles

Edge computing brings trade-offs that are easy to overlook.

- High performance requires energy

- Energy creates heat

- Heat affects reliability
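One way to manage that chain is thermal throttling: as the compute module heats up, the workload is scaled back before temperatures threaten reliability. The sketch below linearly reduces a hypothetical perception frame rate between two assumed temperature thresholds. The function, thresholds, and rates are placeholders, and a real system would have to guarantee that safety-critical functions keep a minimum processing rate, which the `min_fps` floor stands in for here.

```python
def target_fps(temp_c: float, max_fps: float = 30.0, min_fps: float = 10.0,
               throttle_start_c: float = 80.0, throttle_full_c: float = 95.0) -> float:
    """Linearly scale the processing rate down between two temperature thresholds."""
    if temp_c <= throttle_start_c:
        return max_fps
    if temp_c >= throttle_full_c:
        return min_fps  # floor: never drop below a minimum rate
    fraction = (temp_c - throttle_start_c) / (throttle_full_c - throttle_start_c)
    return max_fps - fraction * (max_fps - min_fps)


for temp in (70.0, 87.5, 100.0):
    print(temp, target_fps(temp))  # 30.0, 20.0, 10.0
```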

There are also software challenges:

- Models must be optimized

- Updates must be reliable

- Systems must handle edge cases
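Reliable updates usually rest on two safeguards: check the downloaded image before using it, and keep the previous version so the vehicle can roll back. The sketch below models an A/B slot scheme with a SHA-256 integrity check; the `ABUpdater` class, the slot names, and the `health_check` callback are hypothetical simplifications of what runs on real hardware. A hash check only detects corruption; authenticating who produced an update additionally requires a digital signature, which this sketch omits.

```python
import hashlib


class ABUpdater:
    """Two slots: install into the idle one, keep the other as a fallback."""

    def __init__(self, current_image: bytes):
        self.slots = {"A": current_image, "B": None}
        self.active = "A"

    def install(self, image: bytes, expected_sha256: str, health_check) -> bool:
        if hashlib.sha256(image).hexdigest() != expected_sha256:
            return False                  # corrupted download; nothing changes
        target = "B" if self.active == "A" else "A"
        self.slots[target] = image        # never overwrite the running slot
        previous, self.active = self.active, target
        if not health_check(image):
            self.active = previous        # roll back to the known-good slot
            return False
        return True


updater = ABUpdater(b"model-v1")
v2 = b"model-v2"
print(updater.install(v2, hashlib.sha256(v2).hexdigest(), lambda img: True), updater.active)  # True B
print(updater.install(b"model-v3", "0" * 64, lambda img: True), updater.active)              # False B
```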

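And then there’s security. Processing data locally turns every vehicle into a computing node that attackers could target through its wireless links or through physical access, which widens the attack surface. Protecting that environment is an ongoing effort.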

When Edge Computing Is Necessary in Autonomous Vehicles

Edge computing becomes essential when:

- Decisions must happen instantly

- Connectivity isn’t guaranteed

- Data volumes are too large to transmit

- Safety depends on immediate action

For everything else—analytics, long-term learning—the cloud still plays a role.

Future Trends in Edge Computing in Autonomous Vehicles (2026 Outlook)

Progress in this space isn’t about sudden breakthroughs. It’s incremental.

- AI models are becoming smaller and more efficient

- Hardware is getting faster without increasing power demands significantly

- Security frameworks are improving

- Connectivity is becoming more reliable with newer networks

At the same time, regulations are tightening. Safety, transparency, and reliability are under closer scrutiny.

The direction is clear: systems that are not just faster, but more dependable.

FAQs

Q1: What is edge computing in autonomous vehicles?

Edge computing in autonomous vehicles processes data locally within the vehicle, enabling real-time decisions without relying on external systems.

Q2: Why is edge computing important for self-driving cars?

It is important because it reduces latency and allows immediate responses to changing road conditions.

Q3: How does edge computing differ from cloud computing in vehicles?

Edge computing handles real-time tasks locally, while cloud computing is used for storage, analysis, and model training.

Q4: Can autonomous vehicles function without edge computing?

They cannot operate effectively without it, as real-time decision-making requires local processing.

Q5: What are examples of edge computing applications in autonomous vehicles?

Examples include obstacle detection, lane tracking, emergency braking, and navigation adjustments.

Q6: Is edge computing secure in autonomous vehicles?

It can be secure with proper safeguards, though it introduces additional security challenges compared to centralized systems.

Q7: What is the future of edge computing in autonomous vehicles?

The focus is on efficiency, better hardware, stronger security, and improved integration with connected systems.
