TL;DR

Choosing the right system requires balancing performance, efficiency, and scalability. |

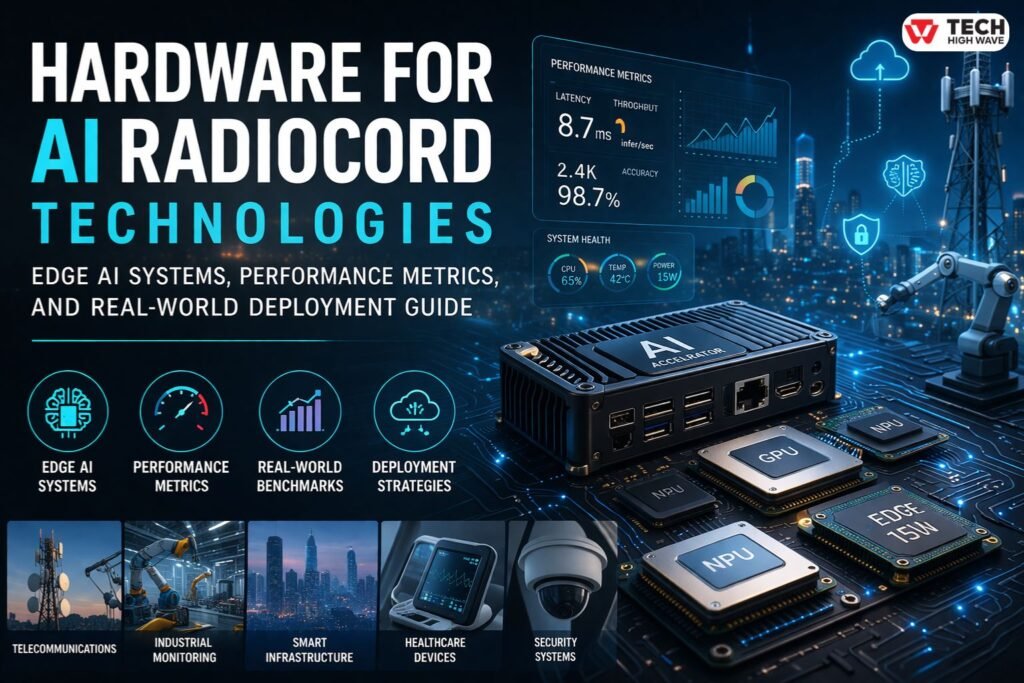

Interest in hardware for AI Radiocord technologies typically emerges when traditional cloud-based AI starts falling short—especially in environments where milliseconds matter. Systems that rely on remote processing often struggle with latency, bandwidth limitations, and inconsistent connectivity.

In practice, this category closely aligns with edge AI hardware, embedded intelligence systems, and AIoT infrastructure designed to process data at or near the source. These systems are commonly used in signal-heavy environments such as telecommunications, industrial monitoring, and smart infrastructure.

Understanding how this hardware performs in real-world conditions—not just in specifications—makes the difference between efficient deployment and costly inefficiencies.

What Is Hardware for AI Radiocord Technologies?

Hardware for AI Radiocord technologies refers to specialized computing systems designed to run AI workloads locally, particularly where continuous data streams, signals, or real-time inputs are involved.

It typically includes:

- Edge AI devices with onboard inference capabilities

- AI accelerators such as NPUs and TPUs

- GPUs optimized for parallel workloads

- Integrated signal processing units with AI capabilities

Rather than a standalone category, it fits within a broader ecosystem of edge AI hardware and embedded AI systems, where low latency, reliability, and efficiency are critical.

How Hardware for AI Radiocord Technologies Works

These systems operate through a pipeline optimized for real-time performance.

Data Input

Sensors, RF modules, or imaging devices generate continuous data streams.

Preprocessing

Noise reduction, filtering, and normalization prepare the data for inference.

AI Inference Engine

Dedicated hardware executes trained models. This is where performance differences become noticeable.

On paper, high TOPS values look impressive. In practice, memory bandwidth and latency often determine actual performance.

Decision Output

The system produces immediate outputs—alerts, classifications, or automated actions.

Selective Cloud Sync

Only critical data is sent to the cloud, reducing bandwidth usage.

In controlled environments, latency stays low. In real deployments, thermal throttling and inconsistent inputs can change that quickly.

Edge AI Hardware vs Cloud AI Infrastructure

AI hardware and cloud AI systems serve different purposes.

Edge AI Hardware

- Processes data locally

- Low latency (milliseconds)

- Reduced bandwidth usage

- Limited by power and thermal constraints

Cloud AI Infrastructure

- High computational capacity

- Scalable resources

- Higher latency due to data transfer

- Dependent on network reliability

In practice, most modern systems combine both. Critical decisions happen at the edge, while long-term analytics remain in the cloud.

Key Performance Metrics (TOPS, TFLOPS, Latency

Explained)

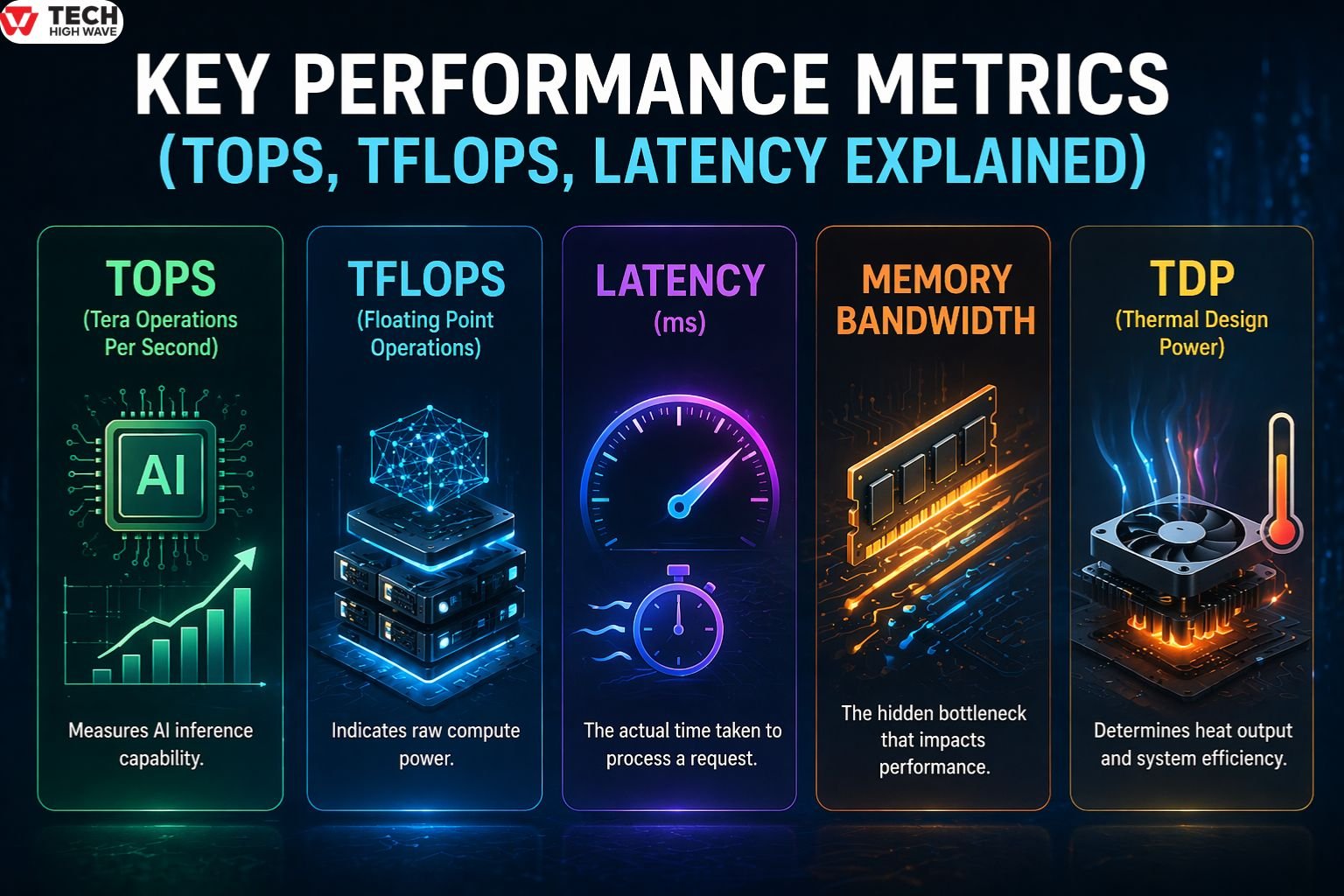

Understanding hardware performance requires more than looking at a single number.

TOPS (Tera Operations Per Second)

Measures AI inference capability. Useful, but only meaningful when paired with memory and latency.

TFLOPS (Floating Point Operations)

Indicates raw compute power, often more relevant for training than inference.

Latency (ms)

The actual time taken to process a request. In real-world systems, this matters more than raw compute.

Memory Bandwidth

Often, the hidden bottleneck. High compute with slow memory results in underperformance.

Thermal Design Power (TDP)

Determines how much heat a system generates. Overheating leads to throttling and performance drops.

In short, performance without efficiency fails in edge environments.

Popular Edge AI Hardware Platforms (Comparison)

NVIDIA Jetson Series

Designed for high-performance edge AI workloads, commonly used in robotics and industrial automation. Offers strong GPU acceleration and high TOPS.

Google Coral

Built for low-power environments. Optimized for efficient inference using Edge TPU, ideal for embedded systems.

Intel Movidius / Habana

Focuses on efficient AI acceleration with integration into broader enterprise ecosystems.

Also Check: What Do You Use Zupfadtazak For? The Internet Mystery Explained (2026 Guide)

Hardware Comparison Overview

| Platform | Approx TOPS | Power Range | Best Use Case |

| Jetson Orin | High | 15–60W | Industrial AI, robotics |

| Coral Edge TPU | Low–Medium | 2–4W | Embedded AI, IoT devices |

| Intel Movidius | Medium | Low–Medium | Vision processing |

Key Features to Look for in Hardware for AI Radiocord Technologies

Chart & Visualization Tools

Data without interpretation creates bottlenecks. Visualization layers help track latency, throughput, and model accuracy in real time.

In production systems, dashboards often reveal issues long before logs do.

Record & Document Storage

Edge systems rely on hybrid storage:

- Local storage for speed

- Cloud storage for scale

Without structured data retention, retraining models becomes difficult and inconsistent.

Collaboration & Sharing

Modern deployments involve multiple teams. Shared access to dashboards and device controls ensures faster decision-making and reduced downtime.

Privacy & Data Security

Security is built into hardware layers through:

- Encrypted processing

- Secure boot systems

- Access controls

This is especially critical in industries handling sensitive or regulated data.

Best Uses for Hardware for AI Radiocord Technologies

Telecommunications

Signal classification and interference detection require near-instant processing.

Industrial Monitoring

Predictive maintenance systems detect anomalies before failures occur.

Smart Infrastructure

Traffic and environmental systems depend on real-time responsiveness.

Healthcare Devices

Portable diagnostics process data locally for immediate insights.

Security Systems

Video analytics and anomaly detection require low-latency inference.

Real Deployment Challenges in Edge AI Hardware

Real-world conditions rarely match lab environments.

Thermal Constraints

Heat buildup reduces performance over time.

Environmental Factors

Dust, vibration, and humidity affect hardware reliability.

Power Limitations

Edge systems often operate within strict energy budgets.

Hardware Failures

Continuous operation increases wear and failure risk.

Firmware Management

Keeping devices updated at scale is complex and often overlooked.

Where most setups fail is not performance—it is sustainability under real conditions.

Who Should Use Hardware for AI Radiocord Technologies?

Best suited for:

- Real-time AI deployments

- Signal processing environments

- Edge-based analytics systems

Less suitable for:

- Basic AI experiments

- Non-real-time workloads

- Small-scale data environments

Specialized hardware only makes sense when latency and data flow are critical.

How to Choose the Right Hardware for AI Radiocord Technologies

A structured evaluation prevents costly mistakes.

✔ Define workload type (real-time vs batch)

✔ Measure required latency (not just compute power)

✔ Evaluate TOPS alongside memory bandwidth

✔ Check thermal limits in real deployment conditions

✔ Confirm power availability and efficiency

✔ Ensure compatibility with AI frameworks

✔ Plan for scalability and modular upgrades

In practice, the best system is not the most powerful—it is the most balanced.

Cost vs Performance Trade-offs

Higher performance often comes with increased cost and complexity.

- High-end GPUs offer strong performance but consume more power

- Low-power accelerators reduce cost but limit scalability

- Custom chips improve efficiency but reduce flexibility

The goal is not maximum performance. It is optimal performance within constraints.

Model Optimization for Edge Hardware

Hardware alone does not guarantee performance.

Quantization (INT8, FP16)

Reduces model size and improves inference speed.

Pruning

Removes unnecessary parameters to improve efficiency.

Model Compression

Optimizes storage and memory usage.

Unoptimized models can overwhelm even advanced hardware.

Common Mistakes to Avoid

Overvaluing TOPS

High compute does not guarantee real-world performance.

Ignoring Memory Bottlenecks

Slow memory limits even powerful processors.

Underestimating Heat

Thermal issues reduce long-term reliability.

Poor Integration Planning

Hardware must align with existing systems.

Skipping Optimization

Unoptimized models waste hardware potential.

Future Trends in Hardware for AI Radiocord Technologies (2026 Outlook)

Edge-Native AI Systems

More systems operate independently of the cloud.

Specialized AI Chips

Custom silicon tailored to specific workloads is increasing.

Energy-Efficient Computing

Power efficiency is becoming a primary design factor.

Integrated AI + Signal Processing

DSP and AI acceleration are merging into unified systems.

Hardware-Level Security

Security is being embedded directly into chip architecture.

FAQs

Q1: What is hardware for AI Radiocord technologies?

It refers to edge AI hardware systems designed for real-time signal processing and local AI inference near the data source.

Q2: How is it different from general AI hardware?

It focuses on low-latency, power-efficient processing rather than large-scale cloud-based computation.

Q3; What metric matters most when choosing hardware?

Latency is often the most critical metric, supported by TOPS and memory bandwidth for accurate performance evaluation.

Q4: Why is edge AI important in these systems?

Edge AI reduces delay and bandwidth usage by processing data locally instead of relying on remote servers.

Q5: Are GPUs always required for these systems?

No, many deployments use NPUs or specialized accelerators that offer better efficiency for specific workloads.

Q6: Can these systems scale over time?

Yes, modular hardware architectures allow gradual expansion as data and processing needs grow.

For More Tech Info Visit: TechHighWave